LangChain has quietly built the most capable stack for production AI agents. Between the graph-based orchestration of LangGraph and the new DeepAgents harness, the developer experience is elite.

But if you’re planning to self-host, there is a “licensing boundary” you need to know about before you commit to the architecture.

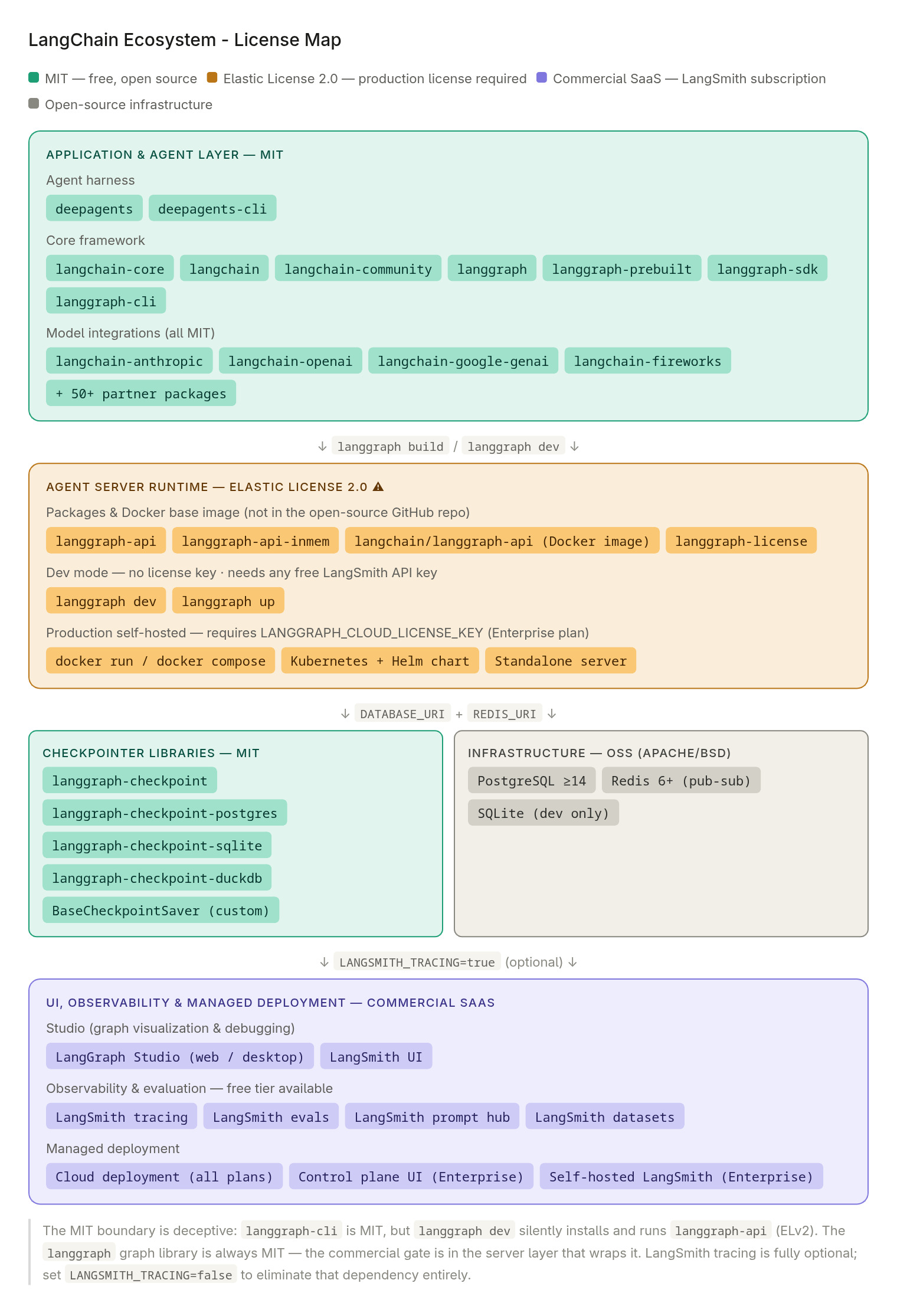

A breakdown of the LangChain ecosystem licensing boundaries.

The Open Core Split

- The Framework: `langgraph`, `langchain-core`, and model integrations are MIT-licensed. Genuinely free.
- The Server: The moment you run `langgraph dev` or `langgraph build`, you are using `langgraph-api`. This component handles the heavy lifting: HTTP, persistence, task queues, and streaming.

The Catch

`langgraph-api` is licensed under the Elastic License 2.0. To run this server runtime in production, you need a commercial license key, typically tied to an Enterprise plan. While you can develop for free on a LangSmith account, the actual “Agent Server” binary is not open source.
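If you want to see where the boundary falls in your own environment, one rough way is to read the license metadata of whatever is installed. The sketch below uses only the standard library's `importlib.metadata`. The helper names (`license_hints`, `classify`, `audit`) and the allowlist are my own assumptions, and the string matching is a heuristic, so treat anything it reports as a prompt to read the actual license file, not as a legal verdict.

```python
import re
from importlib import metadata

# Assumption: the license families this hypothetical team accepts without review.
PERMISSIVE = re.compile(r"\b(mit|apache|bsd)\b")


def license_hints(meta) -> list[str]:
    """Collect every license hint a package's metadata exposes."""
    hints = [meta.get("License-Expression"), meta.get("License")]
    classifiers = meta.get_all("Classifier") or []
    hints += [c for c in classifiers if c.startswith("License ::")]
    return [h for h in hints if h]


def classify(hints: list[str]) -> str:
    """Heuristic: 'permissive' if an allowlisted name appears, else flag it."""
    if not hints:
        return "unknown"
    text = " ".join(hints).lower()
    return "permissive" if PERMISSIVE.search(text) else "review"


def audit(names: list[str]) -> dict[str, str]:
    """Map each package name to a verdict based on its installed metadata."""
    report = {}
    for name in names:
        try:
            meta = metadata.metadata(name)
        except metadata.PackageNotFoundError:
            report[name] = "not installed"
            continue
        report[name] = classify(license_hints(meta))
    return report
```

Calling `audit(["langgraph", "langchain-core", "langgraph-api"])` inside the virtualenv you deploy from gives you one verdict per package. Anything that comes back as `review` or `unknown` deserves a manual look before it goes to production.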

The Practical Consequence

If your team is strictly committed to a 100% OSS stack for compliance or cost reasons, the official “Agent Server” is off the table. You essentially have two paths forward:

- The Custom Route (MIT): Use the `langgraph` library to define your logic, but skip the official CLI. You’ll need to build a custom FastAPI wrapper to handle the persistence, API layer, and streaming yourself.
- The Commercial Route: If the engineering overhead of building a custom server exceeds the cost of a license, you move to the LangGraph Platform (SaaS or Self-Hosted), at which point you are no longer on a 100% OSS stack.
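To get a feel for what the custom route signs you up for, here is a dependency-free sketch of the three jobs the wrapper takes on: persistence keyed by thread ID, a request handler, and streaming as server-sent events. Everything in it is an assumption for illustration. `agent_steps` is a hypothetical stand-in for the updates your compiled graph would emit, the SQLite schema is invented, and in a real build `handle_run` would sit behind a FastAPI route that returns a streaming response.

```python
import json
import sqlite3
from typing import Iterator


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Persistence: one JSON state blob per conversation thread."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS threads "
        "(thread_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
    )
    return conn


def load_state(conn: sqlite3.Connection, thread_id: str) -> dict:
    row = conn.execute(
        "SELECT state FROM threads WHERE thread_id = ?", (thread_id,)
    ).fetchone()
    return json.loads(row[0]) if row else {"messages": []}


def save_state(conn: sqlite3.Connection, thread_id: str, state: dict) -> None:
    conn.execute(
        "INSERT OR REPLACE INTO threads (thread_id, state) VALUES (?, ?)",
        (thread_id, json.dumps(state)),
    )
    conn.commit()


def agent_steps(state: dict, user_input: str) -> Iterator[dict]:
    """Hypothetical stand-in for the per-node updates a graph run would yield."""
    state["messages"].append({"role": "user", "content": user_input})
    yield {"node": "receive", "messages": len(state["messages"])}
    reply = f"echo: {user_input}"
    state["messages"].append({"role": "assistant", "content": reply})
    yield {"node": "respond", "content": reply}


def sse(event: dict) -> str:
    """Streaming: format one update as a server-sent-event frame."""
    return f"data: {json.dumps(event)}\n\n"


def handle_run(
    conn: sqlite3.Connection, thread_id: str, user_input: str
) -> Iterator[str]:
    """API layer: load the thread, stream each update, then persist the result."""
    state = load_state(conn, thread_id)
    for update in agent_steps(state, user_input):
        yield sse(update)
    save_state(conn, thread_id, state)
    yield sse({"event": "end"})
```

Even this toy version leaves out the task queue, retries, auth, and concurrent writes to the same thread. Those gaps are the engineering overhead you are weighing against the price of a license.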

The open-core model is a fair way to build a business—LangChain is providing real infrastructure—but don’t let the “MIT” tag on the repo lead you to believe the entire deployment stack is exempt from commercial licensing.